Anthropic's Quiet Coup

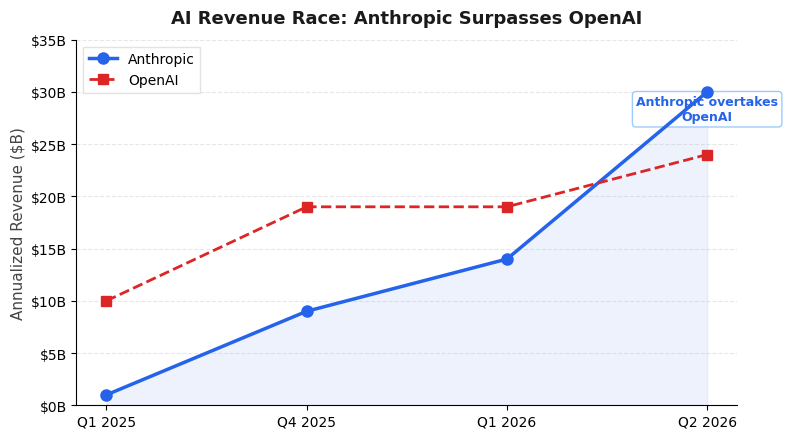

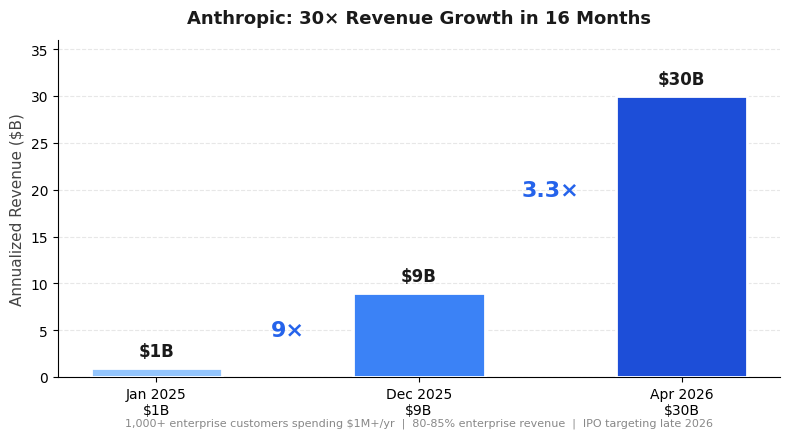

Sixteen months ago, Anthropic was doing $1 billion in annualized revenue. Today it’s at $30 billion, and it just surpassed OpenAI. That’s not a growth story; that’s a regime change. And the most interesting part isn’t the revenue number itself; it’s what Anthropic is building underneath it.

The Revenue Trajectory Is Absurd

Let’s put the numbers in context. Anthropic went from $1B (early 2025) to $9B (end of 2025) to $30B (April 2026). That’s a 30× increase in roughly 16 months. OpenAI, meanwhile, is at $24B: still growing, but Anthropic has now passed it. More than 1,000 business customers are now spending over $1 million annually, and 80 to 85% of revenue comes from enterprise. This isn’t prosumer ChatGPT subscriptions; it’s deep infrastructure spending from companies that have decided Claude is their AI layer.
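To make the 30× figure concrete, here is a back-of-the-envelope sketch of the constant monthly growth rate it would imply. It assumes smooth compounding between the $1B and $30B endpoints, which is a simplification; the function names are made up for illustration.

```python
import math

def monthly_growth_rate(start, end, months):
    """Constant monthly rate that compounds `start` into `end` over `months`."""
    return (end / start) ** (1 / months) - 1

def doubling_time_months(rate):
    """Months needed to double at a constant monthly growth rate."""
    return math.log(2) / math.log(1 + rate)

# Figures from the article: $1B -> $30B run-rate in roughly 16 months.
rate = monthly_growth_rate(1.0, 30.0, 16)
print(f"implied monthly growth: {rate:.1%}")
print(f"implied doubling time: {doubling_time_months(rate):.1f} months")
```

Under that assumption, revenue would have been compounding at roughly 24% a month, doubling about every three and a quarter months.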

The “run-rate” caveat matters, of course. Run-rate takes the most recent period’s revenue and annualizes it, so it is a snapshot extrapolated forward, and AI revenue can be lumpy. But the direction is unambiguous: Anthropic is capturing enterprise AI spend faster than anyone else, and its lead over OpenAI is widening, not closing.
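A quick sketch of why the caveat matters: annualizing one strong month gives a different answer than annualizing a trailing average. The monthly figures below are hypothetical, chosen only so that the latest month matches a $30B run-rate; they are not reported numbers.

```python
def annualized_run_rate(period_revenue, periods_per_year=12):
    """Extrapolate one period's revenue to a full year."""
    return period_revenue * periods_per_year

# Hypothetical monthly revenue in $B (illustrative only, not reported data).
last_three_months = [2.0, 2.1, 2.5]

latest_month = last_three_months[-1]
trailing_average = sum(last_three_months) / len(last_three_months)

print(f"run-rate from latest month: ${annualized_run_rate(latest_month):.1f}B")
print(f"run-rate from 3-month average: ${annualized_run_rate(trailing_average):.1f}B")
```

A $30B run-rate means about $2.5B in the most recent month, not $30B collected over the past year, so a single lumpy month can move the headline number by several billion dollars.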

The Compute Bet That Actually Makes Sense

Here’s where it gets strategically interesting. Anthropic just signed a deal with Google and Broadcom for multiple gigawatts of next-generation TPU capacity, coming online starting 2027. Reports put the total at around 3.5 GW — a staggering amount of compute that signals Anthropic is planning for a world where inference demand dwarfs training demand.

This is a fundamentally different bet from OpenAI’s. OpenAI’s $122B funding round was largely structured as cloud commitments from Amazon, Nvidia, and SoftBank, essentially pre-paying for compute on its partners’ terms. Anthropic, by contrast, is diversifying across all three major clouds (AWS, Google Cloud, Azure) while securing dedicated TPU capacity through a direct partnership. Claude remains the only frontier model available on all three cloud platforms. That’s not an accident; it’s a deliberate distribution strategy that reduces lock-in and maximizes reach.

Amazon remains Anthropic’s primary cloud and training partner, but the Google/Broadcom deal gives Anthropic something OpenAI doesn’t have: optionality. If AWS pricing shifts or capacity constraints hit, Anthropic can route workloads to TPUs. That’s a real competitive moat in a world where compute is the binding constraint.

The IPO Calculus

Anthropic is reportedly targeting a late-2026 IPO at a $350B valuation and is currently raising $10B. The gross margin trajectory is the key number for public markets: 50% expected this year, climbing to 77% by 2028. Right now, Anthropic reportedly spends close to 100% of revenue on AWS, the classic infrastructure-company margin trap. But if the margin projections hold, Anthropic could be a genuinely profitable AI company by the time it lists.
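To see what those margin figures mean in dollars, here is a rough sketch that applies both percentages to the current $30B run-rate. Holding revenue flat is purely an illustrative assumption (revenue in 2028 would presumably be different), and the helper name is hypothetical.

```python
def cost_of_revenue(revenue, gross_margin):
    """Cost of revenue implied by a gross margin, in the same units as revenue."""
    return revenue * (1 - gross_margin)

RUN_RATE_B = 30.0  # $B, the article's April 2026 run-rate; held flat for illustration

for label, margin in [("this year", 0.50), ("2028 target", 0.77)]:
    cost = cost_of_revenue(RUN_RATE_B, margin)
    print(f"{label}: {margin:.0%} margin -> ${cost:.1f}B cost, ${RUN_RATE_B - cost:.1f}B gross profit")
```

On the same revenue base, moving from a 50% to a 77% gross margin would cut the cost of serving that revenue from about $15B to about $7B, which is why the compute terms matter so much to the IPO story.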

The question is whether enterprise AI margins actually sustain at 77%. History suggests that platform companies (AWS, Azure, GCP) eventually commoditize the infrastructure layer and capture the margin themselves. Anthropic’s bet is that model quality and the Claude ecosystem (Claude Code, Claude Cowork, agentic tooling) create enough differentiation to hold pricing power. The compute moat — owning TPU capacity rather than renting GPU capacity — is part of that play.

What This Means

Anthropic’s overtaking of OpenAI isn’t just a revenue milestone. It reflects a company that has made three smart bets simultaneously: enterprise-first go-to-market, multi-cloud distribution, and dedicated compute infrastructure. OpenAI still has brand recognition and consumer reach, but Anthropic is building the plumbing that enterprise AI actually runs on. In infrastructure markets, plumbing usually wins.

The 3.5 GW compute deal is the most underappreciated part of this story. In a world where every AI company is compute-constrained, Anthropic just locked in capacity from 2027 onward, on hardware it controls rather than hardware it rents. That’s the difference between being a customer of the cloud and being a peer.