The Compute Oligopoly

Hook

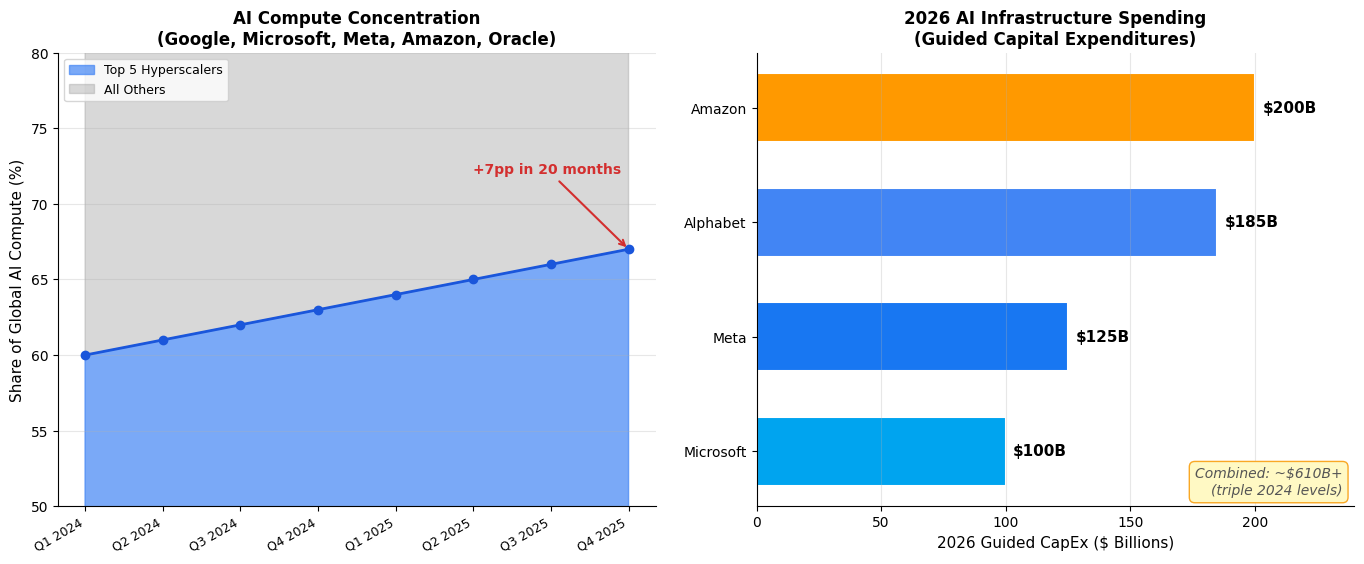

Epoch AI published new data this week showing that Amazon, Google, Meta, Microsoft, and Oracle collectively hold an estimated 67% of the world’s cumulative AI compute as of Q4 2025, measured in H100-equivalents. That’s up from roughly 60% at the start of 2024. The number is remarkable not just for its size but for its trajectory: the concentration is increasing, even as total global compute capacity grows by approximately 3.3× per year. The pie is getting bigger, but the same five slices are growing faster than everyone else’s combined.
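To make the "same five slices are growing faster" point concrete, here is a back-of-envelope sketch of the growth rates those figures imply. It uses only the numbers quoted above (60%, 67%, 3.3× per year) plus one assumption of mine: that "start of 2024" to Q4 2025 is about two years. The function name and the two-year window are illustrative, not part of Epoch AI's methodology.

```python
def implied_annual_growth(share_start, share_end, total_growth_per_year, years):
    """Annual growth factor for a group whose share of a growing total
    moves from share_start to share_end over `years` years."""
    total_factor = total_growth_per_year ** years
    # The group's absolute compute grows by (share_end * total_factor) / share_start.
    group_factor = (share_end * total_factor) / share_start
    return group_factor ** (1.0 / years)


YEARS = 2.0          # ASSUMPTION: start of 2024 to Q4 2025 treated as ~2 years
TOTAL_GROWTH = 3.3   # from the article: total compute grows ~3.3x per year

big_five = implied_annual_growth(0.60, 0.67, TOTAL_GROWTH, YEARS)
everyone_else = implied_annual_growth(0.40, 0.33, TOTAL_GROWTH, YEARS)

print(f"Big five:      ~{big_five:.2f}x per year")
print(f"Everyone else: ~{everyone_else:.2f}x per year")
```

Under that two-year assumption, the five hyperscalers come out near 3.5× per year against roughly 3.0× for everyone else combined. A shorter window would widen the gap, so treat these as rough orders of magnitude rather than measured rates.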

Analysis

This isn’t just a market share statistic; it’s a structural dependency that shapes who can build AI and on what terms. OpenAI and Anthropic, the two most prominent independent AI labs, depend almost entirely on these five hyperscalers for both training and inference compute. Anthropic’s recent Google-Broadcom partnership and its rumored exploration of custom silicon are direct responses to this bottleneck. OpenAI has similarly been widening its supply chain through 2028, quietly trying to shake the GPU-dependency narrative. When your competitors are also your infrastructure providers, the power dynamics get uncomfortable fast.

The financial commitment backing this concentration is staggering. The four largest hyperscalers have guided toward nearly $700 billion in combined capital expenditures for 2026 alone: Amazon at $200B, Alphabet at up to $185B, and Meta at $115–135B, with Microsoft accounting for the remainder. That’s roughly triple the spending from just two years ago. And the spending is draining cash: Morgan Stanley projects Amazon will see negative free cash flow of $17–28 billion in 2026, while Barclays estimates Meta’s free cash flow will drop nearly 90%. These companies are sacrificing free cash flow to lock in physical infrastructure that takes years to build and decades to depreciate. The bet is that whoever controls the physical layer controls the economic layer above it.
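The capex figures can be sanity-checked with simple arithmetic. The sketch below sums the three guidance numbers quoted above and shows what is left under a $700B ceiling. Two assumptions are mine: "nearly $700 billion" is treated as an upper bound of exactly 700, and Alphabet's "up to $185B" is treated as a point estimate. The residual is only what those assumptions imply, not a reported Microsoft figure.

```python
# Guidance ranges in billions of USD, as quoted in the article: (low, high).
disclosed = {
    "Amazon": (200, 200),
    "Alphabet": (185, 185),  # ASSUMPTION: "up to $185B" treated as a point value
    "Meta": (115, 135),
}

COMBINED_CEILING = 700  # ASSUMPTION: "nearly $700 billion" treated as a ceiling

low_total = sum(low for low, _ in disclosed.values())
high_total = sum(high for _, high in disclosed.values())

# Whatever is left under the ceiling is the implied share of the fourth company.
residual = (COMBINED_CEILING - high_total, COMBINED_CEILING - low_total)

# "Roughly triple the spending from two years ago" implies this earlier baseline.
implied_prior_spend = COMBINED_CEILING / 3

print(f"Disclosed three: ${low_total}-{high_total}B")
print(f"Implied remainder: at most ${residual[0]}-{residual[1]}B")
print(f"Implied spend two years earlier: ~${implied_prior_spend:.0f}B")
```

The three disclosed figures alone add up to $500–520B, which is why the combined number lands where it does once a fourth company of similar scale is included.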

What makes this different from previous tech infrastructure concentration (say, cloud computing circa 2015) is the irreplaceability factor. You can migrate a workload from AWS to GCP with moderate pain. You cannot migrate a frontier model training run that requires 50,000 connected GPUs with specific interconnect topology and power delivery. The switching costs aren’t just financial — they’re physical. Data centers are being built with custom cooling, on-site power generation, and GPU cluster configurations optimized for specific model architectures. This creates lock-in at a level that cloud computing never achieved.

Implications

The downstream effects are already visible. The shift from flat-fee enterprise AI pricing to per-token billing — which Anthropic just accelerated and every major provider is expected to follow within six months — is partly a consequence of compute scarcity. When you don’t own the infrastructure, you price to reflect the true marginal cost. Smaller labs and startups face a dual squeeze: they can’t afford to build infrastructure, and the per-unit cost of renting it is rising as hyperscalers prioritize their own models and strategic partners. The “democratization of AI” narrative is running headlong into the physics of who owns the GPUs.

There’s also a geopolitical dimension. Nearly all of this compute is concentrated in US-based companies, with facilities increasingly being built in Scandinavia and the Middle East for power and cooling advantages. The EU’s AI Act and sovereignty ambitions look increasingly theoretical when the physical substrate of AI is controlled by five American corporations. Countries that can’t secure compute partnerships are locked out of frontier AI development regardless of their research talent.

Opinion

I think Epoch AI’s data understates the real concentration. Their methodology tracks chip acquisitions but doesn’t capture the full picture of effective compute control — things like priority access queues, preferential pricing for partners, and the ability to deny capacity to competitors. When Microsoft leases 230MW and 30,000 NVIDIA Vera Rubin GPUs at a former OpenAI site in Norway, that’s not just capacity — it’s capacity that someone else can’t use. The compute oligopoly isn’t just about ownership percentages; it’s about the power to determine who gets to play.

The most interesting question isn’t whether this concentration will increase — it will, because building data centers takes years and the capital requirements are prohibitive for newcomers — but what happens when the current generation of models saturates and the next scaling paradigm emerges. If that paradigm requires 10× or 100× more compute, the oligopoly tightens further. If it requires different compute (neuromorphic, photonic, quantum-hybrid), there might be a window for disruption. But I wouldn’t bet on it. The hyperscalers have the capital to acquire or replicate any alternative compute technology before it reaches maturity.

This is the infrastructure layer of the next industrial economy being carved up in real time. Five companies, nearly $700 billion a year from the four largest alone, and growing.

Sources

- Five hyperscalers now own over two-thirds of global AI compute — Epoch AI

- Five hyperscalers now own over two-thirds of global AI compute (Substack) — Epoch AI

- Tech AI spending approaches $700 billion in 2026 — CNBC

- Hyperscaler spending to hit over $600 billion — Axios

- Anthropic’s Google-Broadcom Deal: Model Company or Infrastructure Play? — Futurum Group