How Google Turned Every Maps Key Into a Financial Weapon

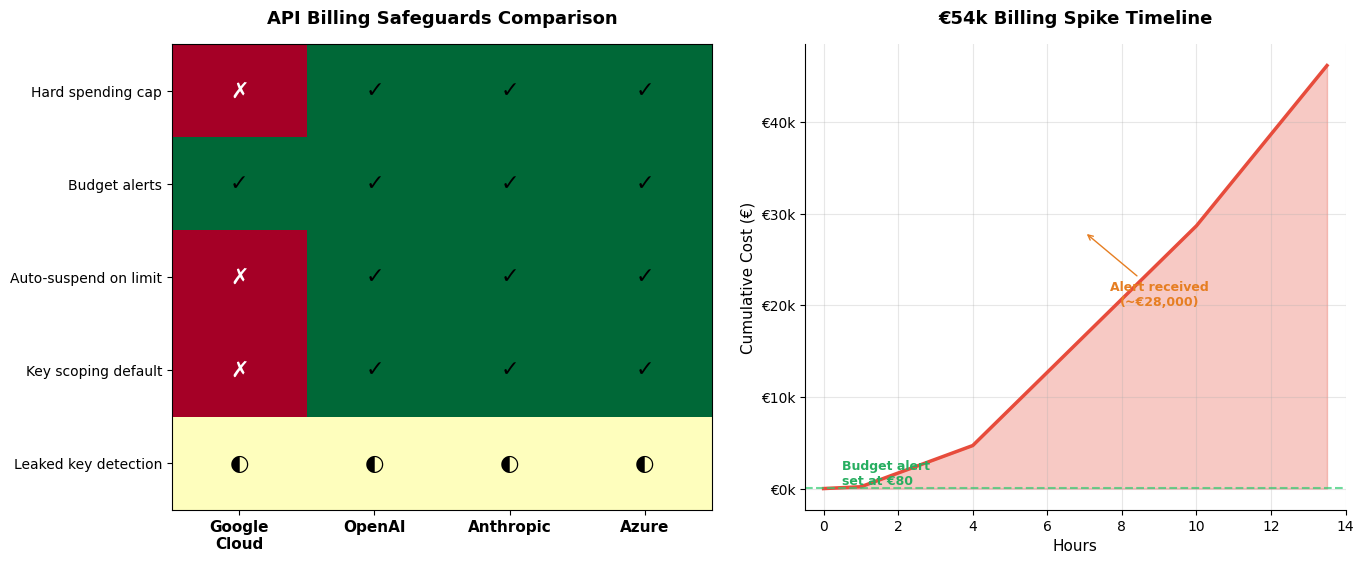

A developer woke up to a €54,000 bill. Not from a data breach, not from a compromised server, but from an old Firebase browser key — the kind Google spent a decade telling developers wasn’t a secret — suddenly being used to hammer Gemini API endpoints for 13 hours straight. Budget alerts were set at €80. They arrived hours late, by which time €28,000 had already been charged. Google Cloud Support denied the billing adjustment. The traffic came from valid project credentials, they said. Case closed.

The Benign Key Problem

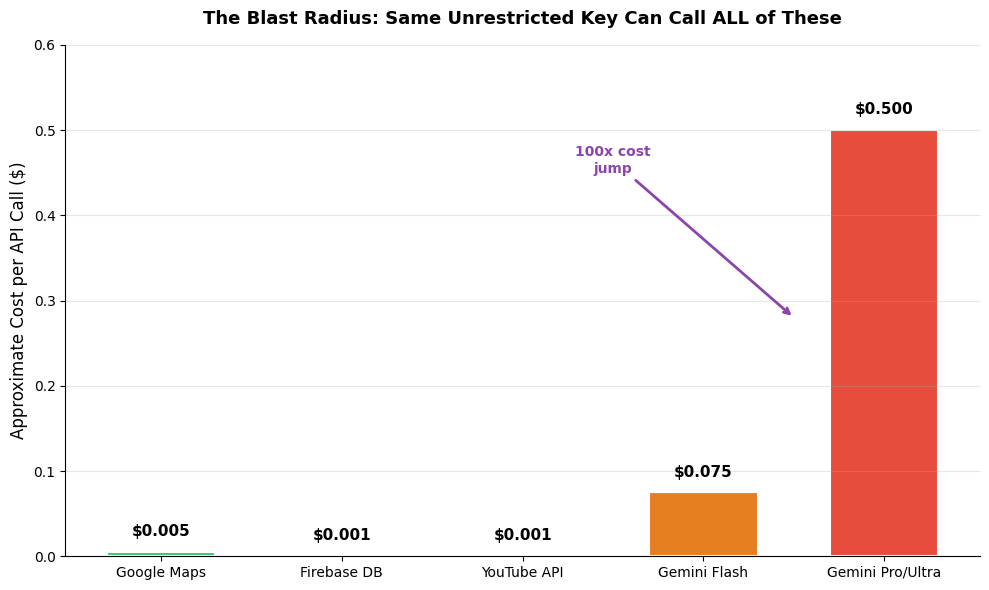

Here’s what makes this story genuinely alarming rather than merely unfortunate: this wasn’t a sophisticated attack. The developer simply enabled Firebase AI Logic on an existing project. That project had an unrestricted browser key, which was fine when it only authenticated Maps API calls or basic Firebase features. But when Gemini’s Generative Language API was enabled on the same project, that key gained AI inference privileges without any warning. The case was not isolated: as Truffle Security researchers discovered in February 2026, the same behavior affected nearly 3,000 public Google Cloud API keys. Keys that had sat in website JavaScript code, GitHub repositories, and mobile apps for years, and that were never considered security-sensitive, could suddenly authenticate to Gemini endpoints, access cached contents, and rack up enormous inference bills.

This is a category of vulnerability that didn’t exist two years ago. When a Google Maps key leaked, the worst case was someone displaying extra map tiles on their website. The marginal cost of a Maps API call is fractions of a cent. A Gemini API call, depending on model and context size, can cost orders of magnitude more. Google took keys designed for a low-cost, high-volume ecosystem and silently gave them access to a high-cost, high-margin one — without changing any defaults.

No Hard Caps, No Circuit Breakers

The billing architecture compounds the problem. Google Cloud’s budget alerts are notifications, not spending limits. When you set a budget alert at €80, Google will send you an email, eventually. The notification pipeline has significant latency and often runs hours behind actual spending. Compare this to OpenAI and Anthropic, which both offer hard spending caps that suspend API access when a threshold is hit. Azure goes further, letting you tie budgets to resource groups that shut down services entirely. Google’s model assumes a human will see the alert and react in time. At LLM inference speeds, where a single bad actor can generate millions of requests in hours, that assumption is catastrophically wrong.
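To put numbers on that mismatch, here is a back-of-the-envelope calculation using only the figures from the €54k incident. It assumes spending was spread evenly over the 13 hours, which is a simplification; the real curve was almost certainly lumpier.

```python
# Figures reported for the incident; everything below is derived from them.
TOTAL_EUR = 54_000             # final bill
DURATION_HOURS = 13            # length of the burst
ALERT_THRESHOLD_EUR = 80       # configured budget alert
CHARGED_AT_ALERT_EUR = 28_000  # already charged when the alert arrived


def average_burn_rate(total_eur: float, hours: float) -> float:
    """Average spend per hour, assuming a constant rate (a simplification)."""
    return total_eur / hours


def seconds_to_reach(amount_eur: float, rate_per_hour: float) -> float:
    """Seconds of spending needed to reach amount_eur at a constant rate."""
    return amount_eur / rate_per_hour * 3600


rate = average_burn_rate(TOTAL_EUR, DURATION_HOURS)
threshold_seconds = seconds_to_reach(ALERT_THRESHOLD_EUR, rate)
overshoot = CHARGED_AT_ALERT_EUR / ALERT_THRESHOLD_EUR

print(f"average burn rate: €{rate:,.0f}/hour")                  # €4,154/hour
print(f"time to cross the alert threshold: {threshold_seconds:.0f} s")  # 69 s
print(f"overshoot when the alert arrived: {overshoot:.0f}x")   # 350x
```

Under that assumption the €80 threshold was crossed in roughly a minute, and the alert landed at 350 times the configured amount. No human reaction time fits inside that window.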

This isn’t a new criticism. Developers have complained about Google’s lack of hard billing limits for years. But the introduction of expensive AI inference turned a theoretical inconvenience into a practical financial threat. The €54k case isn’t even the worst: another developer reported an $82,000 bill in two days from a stolen Gemini key. The pattern is consistent: unrestricted keys plus expensive inference plus no spending caps equals open season on project owners’ wallets.
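In the absence of a native cap, a common workaround is to route budget notifications to Pub/Sub and react to them in code. The sketch below shows only the decision logic. It assumes the message carries base64-encoded JSON with `costAmount` and `budgetAmount` fields, which is my understanding of the budget notification format, and it takes a hypothetical `disable_billing` callback in place of a real Cloud Billing API call. Note that it inherits the same notification latency described above, so it narrows the damage rather than preventing it.

```python
import base64
import json
from typing import Callable


def handle_budget_notification(event: dict, disable_billing: Callable[[], None]) -> bool:
    """Decode a Pub/Sub-style message and trigger the cutoff when cost >= budget.

    `disable_billing` is a hypothetical callback; in a real deployment it would
    detach billing or disable the expensive API. Returns True if it was called.
    """
    payload = json.loads(base64.b64decode(event["data"]).decode("utf-8"))
    cost = float(payload["costAmount"])      # assumed field name
    budget = float(payload["budgetAmount"])  # assumed field name
    if cost >= budget:
        disable_billing()
        return True
    return False


def make_event(cost: float, budget: float) -> dict:
    """Build a fake event for local experimentation (not a real Pub/Sub message)."""
    body = json.dumps({"costAmount": cost, "budgetAmount": budget})
    return {"data": base64.b64encode(body.encode("utf-8")).decode("ascii")}


if __name__ == "__main__":
    triggered = handle_budget_notification(
        make_event(cost=28_000, budget=80),
        disable_billing=lambda: print("cutoff triggered"),
    )
    print(triggered)
```

Passing the cutoff in as a callback keeps the decision testable without credentials, and makes it obvious that the destructive step is something you opt into deliberately.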

The Default Is the Vulnerability

Perhaps the most damning detail from the Truffle Security research is that Google Cloud’s interface still defaults new API keys to “Unrestricted” — meaning they apply to every enabled API in the project, including Gemini. This isn’t a bug; it’s a deliberate design choice that prioritizes ease of use over security. It made sense when API keys were billing identifiers for cheap services. It’s reckless when those same keys can authenticate six-figure inference charges.
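A toy model makes the mechanism concrete. This is not Google’s implementation, just a sketch of the rule as described: an unrestricted key has no API allowlist, so its reach is whatever the project has enabled at the moment of the request. The class names and key names are hypothetical, and the Maps service identifier is illustrative.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

GEMINI = "generativelanguage.googleapis.com"
MAPS = "maps-backend.googleapis.com"  # illustrative service identifier


@dataclass
class ApiKey:
    name: str
    # None models an "Unrestricted" key: no API allowlist at all.
    allowed_services: Optional[Set[str]] = None


@dataclass
class Project:
    enabled_services: Set[str] = field(default_factory=set)

    def authorizes(self, key: ApiKey, service: str) -> bool:
        """A key works for a service if the service is enabled and the key allows it."""
        if service not in self.enabled_services:
            return False
        if key.allowed_services is None:
            return True  # unrestricted: follows whatever the project enables
        return service in key.allowed_services


project = Project({MAPS})
legacy = ApiKey("old-browser-key")       # unrestricted, created years ago
pinned = ApiKey("maps-only-key", {MAPS})  # restricted to Maps

before = project.authorizes(legacy, GEMINI)
project.enabled_services.add(GEMINI)      # someone turns on Gemini
after = project.authorizes(legacy, GEMINI)

print(before, after, project.authorizes(pinned, GEMINI))  # False True False
```

Nothing about the legacy key changed between the two checks. Only the project did, which is exactly why the key’s owner gets no signal that its risk profile has shifted.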

Google has responded by saying it will block known leaked keys and send proactive notifications. But “known leaked keys” means keys that have already been discovered and reported — a reactive posture that does nothing to prevent the next case. The real fix would be straightforward: default new keys to restricted scopes, offer hard spending caps, and require explicit opt-in before existing keys gain access to new, expensive APIs. Google hasn’t committed to any of these changes.

What This Means for Developers

If you’re running any project on Google Cloud with Gemini enabled, you should audit your API keys immediately. Check for unrestricted keys, especially those embedded in client-side code. Set API restrictions on every key, even if it means breaking existing integrations temporarily. Consider this: the cost of fixing a broken map widget is nothing compared to a five-figure surprise bill.
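As a starting point for that audit, here is a minimal sketch that flags keys with no API allowlist. It assumes you have exported your keys as JSON (for example with `gcloud services api-keys list --format=json`) and that each entry carries its allowlist under `restrictions.apiTargets`; treat those field names as assumptions and check them against your own export. The sample data is invented.

```python
from typing import Iterable, List


def find_unrestricted_keys(keys: Iterable[dict]) -> List[str]:
    """Return display names (or resource names) of keys with no API allowlist."""
    flagged = []
    for key in keys:
        restrictions = key.get("restrictions") or {}
        # A referrer or IP restriction alone does not limit which APIs the key
        # can call, so only a non-empty apiTargets list counts here.
        if not restrictions.get("apiTargets"):
            flagged.append(key.get("displayName") or key.get("name", "<unnamed>"))
    return flagged


# Invented sample data shaped like the assumed export format.
sample_keys = [
    {"displayName": "old-browser-key"},
    {
        "displayName": "referrer-only-key",
        "restrictions": {"browserKeyRestrictions": {"allowedReferrers": ["example.com/*"]}},
    },
    {
        "displayName": "maps-only-key",
        "restrictions": {"apiTargets": [{"service": "maps-backend.googleapis.com"}]},
    },
]

print(find_unrestricted_keys(sample_keys))
# ['old-browser-key', 'referrer-only-key']
```

The referrer-restricted key is flagged on purpose: limiting where a key can be used from is not the same as limiting what it can call.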

More broadly, this episode reveals a structural risk in how cloud providers bundle services. When a single project credential can authenticate to services with wildly different cost profiles — from free-tier Maps to premium AI inference — the blast radius of a leaked key scales with the provider’s product ambitions, not the developer’s intent. Until Google fixes its defaults, every unrestricted key is a liability waiting to be exploited.

Sources

- Unexpected €54k billing spike in 13 hours — Google AI Developers Forum

- Thousands of Public Google Cloud API Keys Exposed with Gemini Access — The Hacker News

- 3,000 Google API Keys Just Got a Lot More Dangerous — Zuplo

- Dev stunned by $82K Gemini API key bill after theft — The Register

- €54k in 13 hours: unrestricted Firebase key drained via Gemini API — Agent Wars