The Wolf That Hunts Privacy

A company whose entire business model is tracking you across the web just discovered a vulnerability in Firefox that defeats Tor Browser’s anonymity guarantees. The irony is not lost on anyone — but the implications are far more troubling than the punchline.

The Bug That Knows Who You Are

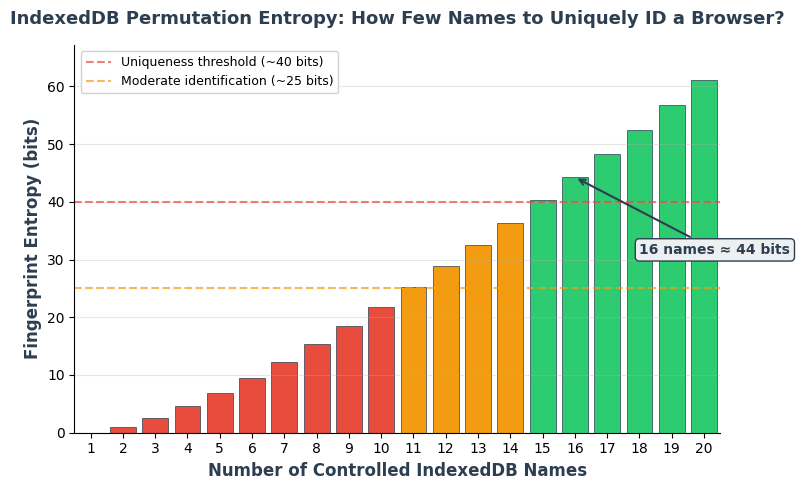

Fingerprint.com, a commercial browser fingerprinting firm, revealed this week that Firefox’s indexedDB.databases() API returns database metadata in an order derived from internal hash table bucket layouts rather than any canonical sorting. This sounds like an implementation footnote. It is anything but. The order of returned entries is a stable, process-lifetime identifier: a fingerprint that persists across unrelated websites, private browsing sessions, and even Tor Browser’s “New Identity” feature, which is specifically designed to sever all linkability between sessions. Sixteen database names can be returned in 16! (about 2.1 × 10^13) different orders, so an attacker gets up to roughly 44 bits of entropy, far more than the 33 or so needed to uniquely identify a browser instance among billions.
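The 44-bit figure comes from counting orderings: n distinct names can come back in n! different orders, so an observed order carries at most log2(n!) bits. Here is a quick check of that arithmetic. It assumes every ordering is equally likely, which is an idealization, so the result is an upper bound rather than a measured value.

```python
import math


def ordering_entropy_bits(n_names: int) -> float:
    """Upper bound on the bits carried by the order of n distinct names.

    Assumes all n! permutations are equally likely, which a real hash
    table layout will not achieve exactly.
    """
    return math.log2(math.factorial(n_names))


# Bits available from the order of 16 probe database names.
print(f"16 names: {ordering_entropy_bits(16):.2f} bits")

# Bits needed to single out one browser among roughly 8 billion.
print(f"8 billion browsers: {math.log2(8e9):.2f} bits")
```

The gap between the two numbers is why a modest number of database names is already enough for a unique identifier.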

The mechanism is deceptively simple. Firefox maps database names to UUIDs via a global hash table (StorageDatabaseNameHashtable) that persists for the lifetime of the browser process. When any site calls indexedDB.databases(), the results come back in an order determined by hash bucket layout — not creation order, not alphabetical order, but an order unique to the current process state. Because this hash table is shared across all origins and cleared only on full browser restart, two completely unrelated websites can independently derive the same identifier. A news site and a shopping site, with no cookies or shared storage between them, can silently link a user’s activity. In Tor Browser, this defeats the entire point.
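The behavior described above can be imitated with a toy model. This sketch is not Firefox's code: `ToyBrowserProcess`, its seed, and the trick of sorting by a random UUID are all hypothetical stand-ins for a process-wide name-to-UUID table whose bucket layout decides the listing order.

```python
import random
import uuid


class ToyBrowserProcess:
    """Toy stand-in for one browser process (not Firefox's real code)."""

    def __init__(self, seed: int) -> None:
        # Hypothetical: the seed stands in for whatever per-process
        # randomness shapes the real hash table's layout.
        self._rng = random.Random(seed)
        # One table shared by every origin; it lives until the
        # process object is discarded (the "restart").
        self._uuid_by_name: dict[str, uuid.UUID] = {}

    def open_database(self, name: str) -> None:
        # The first use of a name fixes its UUID for the process lifetime.
        if name not in self._uuid_by_name:
            self._uuid_by_name[name] = uuid.UUID(int=self._rng.getrandbits(128))

    def list_databases(self, names: list[str]) -> list[str]:
        """Return names in a layout-derived order, not a canonical one."""
        for name in names:
            self.open_database(name)
        # Assumption: ordering by UUID value imitates bucket order.
        return sorted(names, key=lambda n: self._uuid_by_name[n].int)


PROBE_NAMES = [f"probe-{i}" for i in range(16)]

process = ToyBrowserProcess(seed=1)
# Two unrelated origins probe the same names, in different creation orders.
seen_by_news_site = process.list_databases(PROBE_NAMES)
seen_by_shop_site = process.list_databases(list(reversed(PROBE_NAMES)))

# A full restart discards the table and builds a new one.
restarted = ToyBrowserProcess(seed=2)
seen_after_restart = restarted.list_databases(PROBE_NAMES)

print(seen_by_news_site == seen_by_shop_site)   # same process: orders match
print(seen_by_news_site == seen_after_restart)  # new process: almost surely differ
```

In this model the order ignores creation order and origin, and depends only on state that survives until the process object is replaced, which is the property that makes the real bug usable as a cross-site identifier.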

Why Tor Users Should Be Worried

Tor Browser’s security model is built on one core promise: sessions are unlinkable. When you click “New Identity,” the browser is supposed to become a fresh, anonymous entity — new circuits, new cookies, new cache, no memory of who you were. This vulnerability punches a hole through that wall. The IndexedDB hash table persists through New Identity. A hostile .onion site or a clearnet site accessed through Tor can fingerprint a user, wait for them to get a new identity, and recognize them anyway.

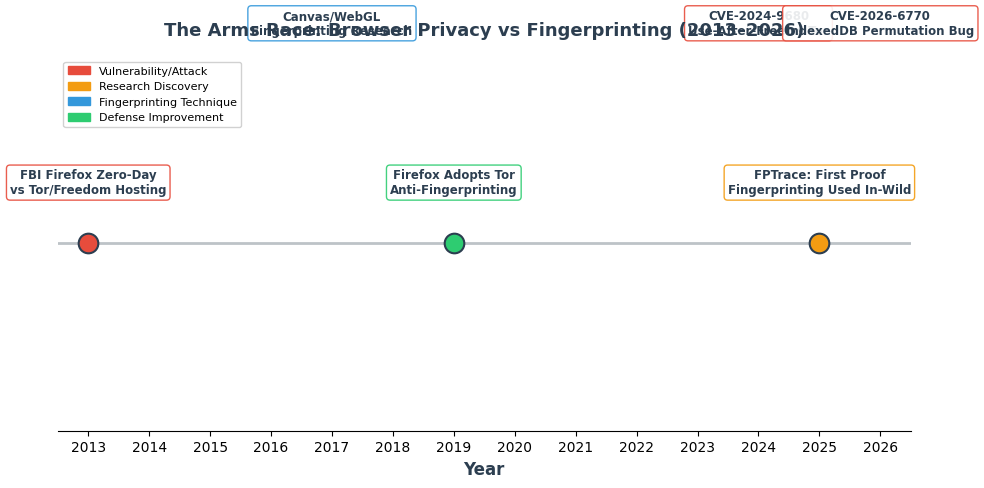

The threat is not theoretical. The FBI has previously exploited Firefox vulnerabilities to de-anonymize Tor users, most infamously in 2013, when a Firefox exploit served from Freedom Hosting’s servers identified visitors to sites on the Tor network. The difference here is subtler but arguably worse: this isn’t a one-off law enforcement operation. This is an implementation flaw behind a standard web API, one that has been quietly exploitable for years by anyone who thought to look. Fingerprint.com found it because looking for exactly this kind of thing is their business. The question is how long less scrupulous actors have known about it.

The Tracking Industry as White Hat

There’s a deep contradiction at the heart of this story. Fingerprint.com exists to help websites track users. They profit from the same surveillance infrastructure that privacy advocates fight against. Yet their engineering team just did the Tor Project and Mozilla a significant favor by responsibly disclosing a bug that undermined the privacy of millions of users. The patch — simply sorting the results before returning them — landed in Firefox 150 and ESR 140.10.0, tracked as CVE-2026-6770.
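Sorting works as a fix because a canonical order depends only on the names themselves, never on process state. A minimal sketch of that idea follows. The shuffled lists are hypothetical stand-ins for hash table order in two different processes, and `canonical_listing` is an illustrative name, not Mozilla's patch.

```python
import random


def canonical_listing(names: list[str]) -> list[str]:
    """Return names in lexicographic order.

    The output depends only on the set of names, so it reveals nothing
    about the internal layout the names were read from.
    """
    return sorted(names)


names = [f"db-{i:02d}" for i in range(16)]

# Hypothetical stand-ins for two processes with different internal layouts.
layout_a = random.Random(1).sample(names, k=len(names))
layout_b = random.Random(2).sample(names, k=len(names))

# Whatever the layouts were, the canonical listings are identical.
print(canonical_listing(layout_a) == canonical_listing(layout_b))
```

Any fixed order would do the same job, as long as it is computed from the returned values alone.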

This pattern is not new. Browser fingerprinting companies are, by necessity, among the most sophisticated researchers of browser privacy. They know where the leaks are because building tracking technology requires mapping every possible information channel. The uncomfortable truth is that the surveillance economy’s R&D labs produce some of the most important privacy research in the world — not out of altruism, but because finding new fingerprinting vectors is their product. When they publish a vulnerability, they’re simultaneously demonstrating their capability to potential customers and (in this case) actually fixing a problem. The incentives are genuinely tangled.

The Bigger Picture: Privacy Is an Implementation Detail

The Firefox IndexedDB bug illustrates a principle that privacy engineers keep learning the hard way: you cannot guarantee privacy at the API level if your implementation leaks process-level state. Firefox’s developers didn’t intend to create a tracking identifier. They just used a hash table that didn’t guarantee ordering, and nobody noticed that the resulting order, unspecified but stable for the life of the process, was itself a signal. This is the same class of bug as other leaks of incidental implementation state, such as hash-ordered container iteration, GPU timing side channels, and memory allocator behavior, all of which have been weaponized for fingerprinting.

The 2025 ACM Web Conference paper from Texas A&M, Johns Hopkins, and F5 Inc. provided the first large-scale evidence that browser fingerprinting is actively used for tracking in the wild, not just a theoretical threat. Their tool, FPTrace, demonstrated that manipulating fingerprints causes measurable changes in ad behavior, which is evidence that the advertising industry is already using fingerprinting to identify users. Against this backdrop, the IndexedDB bug isn’t an isolated incident. It’s one more entry in a growing catalog of implementation details that betray user privacy despite browser vendors’ explicit efforts to prevent it.

For Tor Browser users, the fix can’t come fast enough. For everyone else running Firefox Private Browsing, update to version 150 or later. And for the rest of us: the fact that a tracking company found this bug before Mozilla’s own security team, before the Tor Project, and before any academic researcher should tell us something about where the real expertise in browser privacy lives — and it’s not where we’d like it to be.

Sources

- We Found a Stable Firefox Identifier Linking All Your Private Tor Identities

- CVE-2026-6770 — Mozilla Foundation Security Advisory

- NSA targeted Tor users via Firefox flaw

- The First Early Evidence of the Use of Browser Fingerprinting for Online Tracking

- Browser Fingerprinting: The Tracking You Don’t See