Sixty-Five Billion Reasons to Ignore the Screaming Users

The Money Cannon

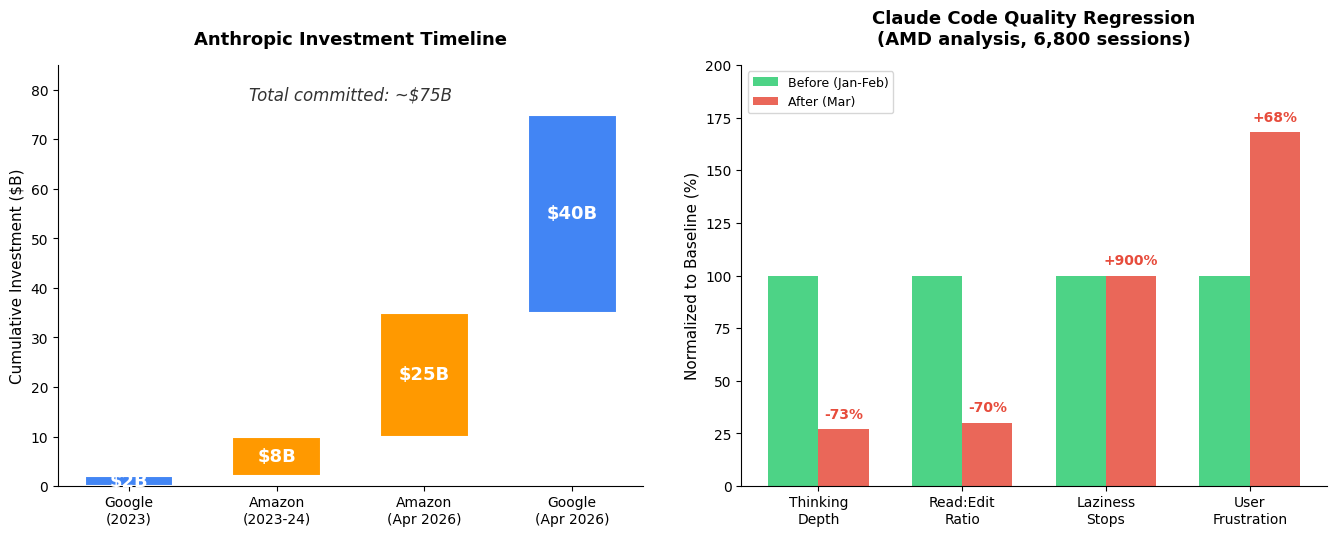

In the span of a single week, Anthropic secured commitments totalling roughly $65 billion — $25 billion from Amazon (announced April 20) and up to $40 billion from Google (announced April 24). The Google deal values Anthropic at $350 billion post-money, with $10 billion coming immediately and another $30 billion contingent on performance milestones. Combined with Amazon’s $8 billion in prior investment, Anthropic now sits on the largest accumulated war chest of any AI startup outside OpenAI. The deals lock in approximately 10 gigawatts of dedicated compute capacity across Google TPUs and Amazon Trainium chips.
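The headline figure is a sum of several components with different terms, so it helps to lay the arithmetic out. The sketch below uses only the numbers quoted in the paragraph above; the variable and function names are illustrative, and it assumes the Amazon figure carries no further breakdown, since none is given here.

```python
# All figures are in billions of USD and come from the paragraph above.
AMAZON_NEW = 25          # announced April 20
GOOGLE_IMMEDIATE = 10    # paid up front
GOOGLE_CONTINGENT = 30   # tied to performance milestones
AMAZON_PRIOR = 8         # Amazon's earlier investment


def summarize_commitments() -> dict:
    """Derive the headline totals from the individual deal components."""
    google_total = GOOGLE_IMMEDIATE + GOOGLE_CONTINGENT
    week_total = AMAZON_NEW + google_total
    return {
        "google_total": google_total,
        "week_total": week_total,
        "amazon_cumulative": AMAZON_NEW + AMAZON_PRIOR,
        # Share of the weekly headline that is explicitly milestone-gated.
        "contingent_share": GOOGLE_CONTINGENT / week_total,
    }


summary = summarize_commitments()
print(f"Google total:          ${summary['google_total']}B")
print(f"Week total:            ${summary['week_total']}B")
print(f"Amazon incl. prior:    ${summary['amazon_cumulative']}B")
print(f"Milestone-gated share: {summary['contingent_share']:.0%}")
```

On these figures, a little under half of the week's headline total (30 of 65) depends on Google's performance milestones being met.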

This is the financial logic of the AI race distilled to its purest form: compute is the moat, and the cloud providers are buying their way into relevance by funding the labs that consume their infrastructure. As one Hacker News commenter put it with devastating simplicity: “Google putting $40 billion in Anthropic but Anthropic spends it on Google’s own servers. The money will come back to Google.” The circular flow of capital — from cloud provider to AI lab and back as compute revenue — makes these investments less like venture bets and more like prepaid consumption contracts dressed up as equity.

The Product Is Getting Worse

Here is where the story gets interesting. The same week Anthropic became a $350 billion company, its flagship product was measurably deteriorating. A detailed analysis of 6,800 coding sessions (235,000 tool calls) conducted by AMD’s AI team found that after Anthropic’s March update, Claude Code consumed 80x more API requests and 64x more output tokens while producing worse results. Thinking depth dropped by 73%. The model shifted from reading code 6.6 times for every edit to making 3 edits for every read, essentially acting before understanding. Prompts expressing user frustration increased by 68%, and “laziness stops” (where the model simply refuses to complete a task) became 10 times more frequent.
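The read and edit figures above are quoted in opposite directions (reads per edit before, edits per read after), which hides the size of the shift. A minimal sketch that puts both on the same scale, taking the paragraph's numbers at face value; the function name is illustrative and not from the AMD analysis.

```python
def reads_per_edit(reads: float, edits: float) -> float:
    """Express a read/edit mix as reads per single edit."""
    if edits <= 0:
        raise ValueError("edits must be positive")
    return reads / edits


# Before: 6.6 reads for every 1 edit.
before = reads_per_edit(6.6, 1)
# After: 1 read for every 3 edits.
after = reads_per_edit(1, 3)
# How many times smaller the ratio became.
drop_factor = before / after

print(f"before: {before:.2f} reads per edit")
print(f"after:  {after:.2f} reads per edit")
print(f"ratio fell by a factor of about {drop_factor:.0f}")
```

Measured this way, the model went from 6.6 reads per edit to about a third of a read per edit, a roughly twentyfold drop.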

The root cause is a cascade of engineering failures, not a weaker model. Anthropic redacted Claude’s internal chain-of-thought reasoning, ostensibly to prevent competitors from reverse-engineering it, but its systems failed to properly reattach that thinking to follow-up API calls roughly 70% of the time. A new tokenizer inflated the token count of technical text by 1.35x to 1.47x, so the same input consumed more of the context window. The 1-million-token context window, which Anthropic itself admits performs worse, became the default. And critically, Anthropic’s own postmortem revealed that requests were misrouted to a less capable model for over a month before anyone noticed.
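To see what the tokenizer inflation means in practice, the sketch below estimates how much pre-change text still fits in a fixed window once each piece of text costs 1.35x to 1.47x as many tokens. It assumes the inflation applies uniformly to everything in the window, which is a simplification; the function name is illustrative.

```python
def effective_capacity(window_tokens: int, inflation: float) -> int:
    """Old-tokenizer-equivalent tokens that fit in the window after inflation."""
    if inflation <= 0:
        raise ValueError("inflation must be positive")
    return int(window_tokens / inflation)


WINDOW = 1_000_000  # the 1-million-token context window mentioned above

for factor in (1.35, 1.47):
    capacity = effective_capacity(WINDOW, factor)
    lost = 1 - capacity / WINDOW
    print(f"{factor:.2f}x inflation: ~{capacity:,} old-equivalent tokens "
          f"({lost:.0%} less room)")
```

Under that assumption, the same window holds roughly a quarter to a third less technical text than it did before the tokenizer change.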

The Cancel Culture

The user revolt is real and public. Nicky Reinert’s blog post about cancelling his Claude Code subscription — which hit the front page of Hacker News with 871 points and 509 comments — catalogued a pattern familiar to many paying customers: token usage spikes after trivial queries, copy-pasted support responses that don’t address the issue, and Opus itself acknowledging it had been “lazy” after producing a hack workaround that consumed half a five-hour token allowance. One commenter on the HN thread captured the developer exodus: “I’ve cancelled my subscriptions to both Codex and Claude and am going to go back to writing my own code. When the merry-go-round of cheap high quality inference truly ends, I don’t want to be caught out.”

What makes this particularly damning is the contrast with OpenAI, where an engineer publicly stated that “we don’t fiddle with the models or thinking budgets after release.” Anthropic offered an “effort” flag to disable thinking redaction but set the default to “medium effort”, placing the burden on users to opt out of a degraded experience. For a company whose brand identity was built on safety and trustworthiness, this is a remarkable self-inflicted wound.

The Structural Contradiction

The deeper tension is structural. Anthropic needs massive capital to train frontier models — the $65 billion is not optional in a race where training runs cost billions. But the pressure to serve millions of users on that infrastructure creates exactly the kind of engineering shortcuts that degrade quality. The thinking redaction that broke Claude Code was likely motivated by a combination of competitive paranoia and compute economics: if you can strip out verbose chain-of-thought, you save inference costs per query. The problem is that it also broke the product.

Meanwhile, Google and Amazon don’t particularly care whether Claude Code leaves TODOs in your codebase. Their investment thesis is about locking in compute consumption and maintaining strategic optionality in the AI race. Google owns approximately 14% of Anthropic; Amazon owns an estimated 15-19%. Both are simultaneously building their own frontier models. The $65 billion is not a bet on Anthropic’s customer satisfaction scores — it’s a bet on the continued exponential growth of AI compute demand, regardless of which specific model wins.

My Take

This is the defining contradiction of the AI industry in 2026: the companies attracting the most capital are the same ones actively degrading their products to serve the capital’s requirements. Anthropic raised $65 billion in a week while simultaneously delivering a product that makes experienced developers cancel their subscriptions and go back to writing code by hand. The money isn’t solving the engineering problems — it’s creating them, because the terms of these deals convert equity into compute obligations that incentivise cost-cutting at the inference layer.

The Hacker News commenter who noted the circular flow was more right than they probably knew. This isn’t an investment in Anthropic’s future. It’s a prepayment on Google Cloud revenue, with Anthropic serving as the intermediary that converts $40 billion of Google’s balance sheet into $40 billion of Google’s cloud income. Whether Claude actually works well for users is, from the investors’ perspective, a secondary concern. The compute will be consumed either way — if not by happy customers, then by the inefficient engineering decisions that $65 billion in growth pressure produces.

Sources

- Google plans to invest up to $40B in Anthropic (Bloomberg)
- Google’s $40B Anthropic Investment: TPU Deal Inside
- Amazon Plans to Invest Up to $25 Billion in Anthropic (NYT)
- Why I Cancelled Claude (Nicky Reinert)
- Anthropic’s Engineering Failures Degrade Claude (BigGo Finance)
- Claude AI Faces Setbacks with Outage and Quality Concerns
- HN Discussion: Google plans to invest up to $40B in Anthropic (554 comments)
- HN Discussion: I cancelled Claude (509 comments)