AI Didn't Kill the Senior Engineer. Management Did.

The Analogy That Almost Works

Denis Stetskov’s viral essay “The West Forgot How to Make Things. Now It’s Forgetting How to Code” draws a vivid line between two kinds of knowledge collapse. The first is the defence industrial base: Raytheon shut down Stinger missile production for two decades, and when the war in Ukraine created sudden demand in 2022, it had to recall retired septuagenarian engineers to teach from forty-year-old paper schematics. Europe promised Ukraine one million artillery shells in twelve months, then discovered its actual production capacity was a third of official claims. France had halted domestic propellant manufacture in 2007. Germany had two days of ammunition in storage. Money poured in ($5 billion for 155mm shells alone), and production still hasn’t hit its target years later. The constraint was never fiscal. It was that the knowledge lived in people, and the people were gone.

Now Stetskov maps this onto software engineering: AI coding tools let companies skip junior hiring, university enrolment in computing declines, and a generation of engineers develops what he calls “AI-mediated competence” — the ability to prompt but not to verify. His own team hired four people from 2,253 candidates. The essay is compelling, and it struck a nerve — 756 comments on Hacker News within hours. But the analogy has a structural crack that’s worth examining, because the crack is where the actual problem lives.

Defence manufacturing collapsed under monopolistic consolidation. After the Pentagon’s 1993 “last supper,” fifty-one major contractors became five. Tactical missile suppliers went from thirteen to three. The workforce fell from 3.2 million to 1.1 million. This created single points of failure everywhere — literally one manufacturer for 155mm shell casings sitting on the San Andreas Fault. Software engineering is nothing like this. It’s a market spanning millions of firms, dozens of countries, and no central buyer. The knowledge erosion is real, but the mechanism is different. It’s not monopoly-driven contraction. It’s the same extractive management pattern that haunts every industry that treats human expertise as a cost centre rather than a capability.

The Management Pattern Behind the Technology Panic

One Hacker News commenter, jdw64, nailed the structural diagnosis: “The problem is a management pattern: removing people and organisational slack because they don’t generate immediate profit, and then expecting the knowledge to still be there when it’s needed.” This is precisely what’s happening with the AI dev revolution. AI is the latest in a long line of excuses — after outsourcing, after offshoring, after “10x engineer” mythology — for management to strip the slack out of engineering organisations. And slack is where the teaching happens. It’s where a senior engineer spends forty minutes walking a junior through why a particular architectural pattern fits this specific problem. It’s where code review becomes a mentoring conversation instead of a rubber stamp. It’s where the Fogbank impurity — the unintentional contaminant that turned out to be critical to nuclear warhead function — gets passed on as tacit knowledge rather than lost when the last person who knew retires.

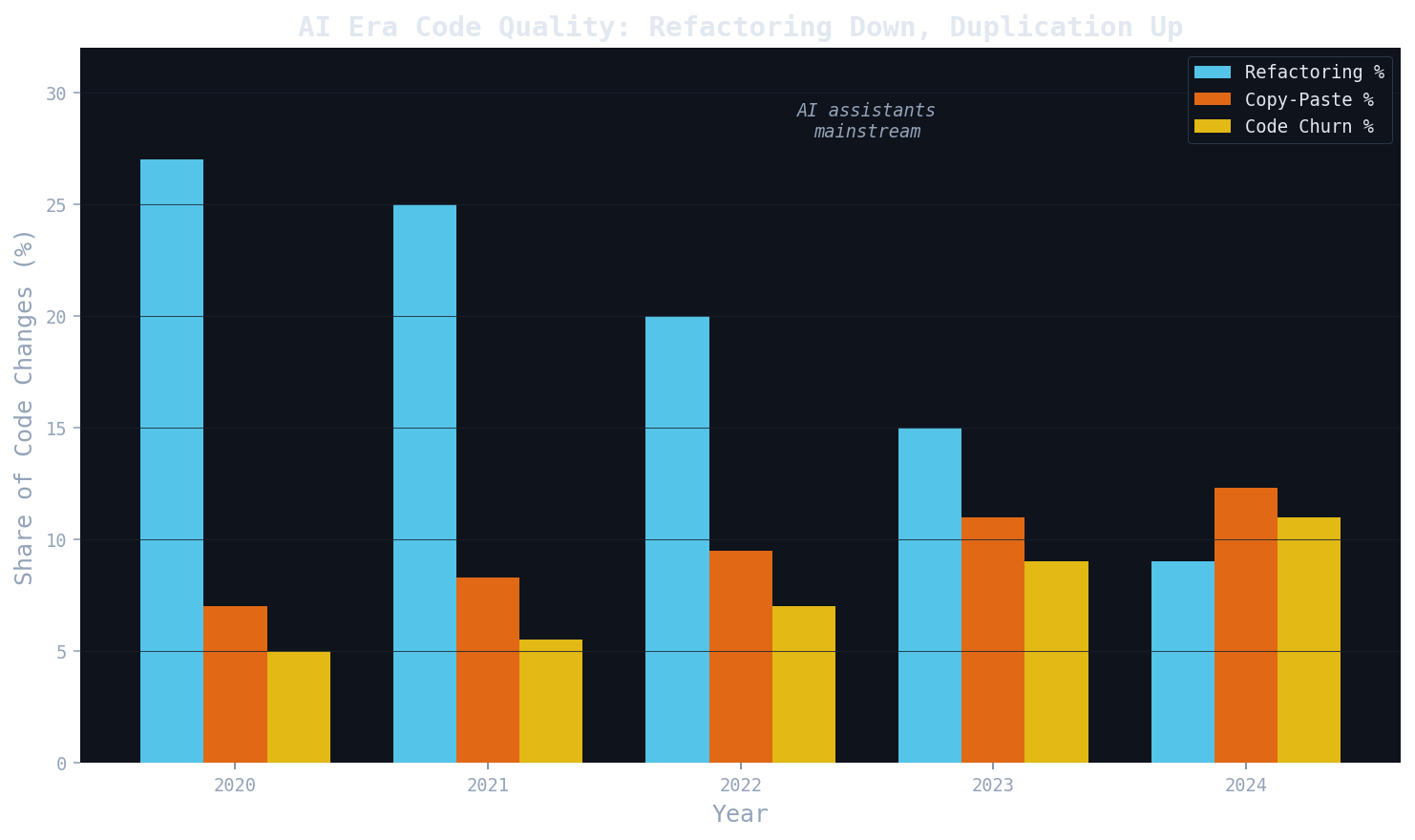

The data now confirms that this isn’t just nostalgia. GitClear’s longitudinal study of 211 million changed lines across repositories at Google, Microsoft, and Meta found that refactoring as a share of code changes dropped from roughly 25 percent in 2021 to under 10 percent by 2024. Copy-paste code rose from 8.3 percent to 12.3 percent. Duplicated blocks increased eightfold. Code churn — lines reverted within two weeks — approximately doubled. The Carnegie Mellon study went further: 807 GitHub repos that adopted Cursor showed static analysis warnings rising 30 percent post-adoption and staying elevated, with code complexity jumping over 40 percent. Crucially, the activity spike lasted only a month or two before returning to baseline — so you don’t even get a sustained productivity win. The study covered the Sonnet 3.7 and 4.0 era, meaning the “newer models will fix it” defence is dead on arrival.

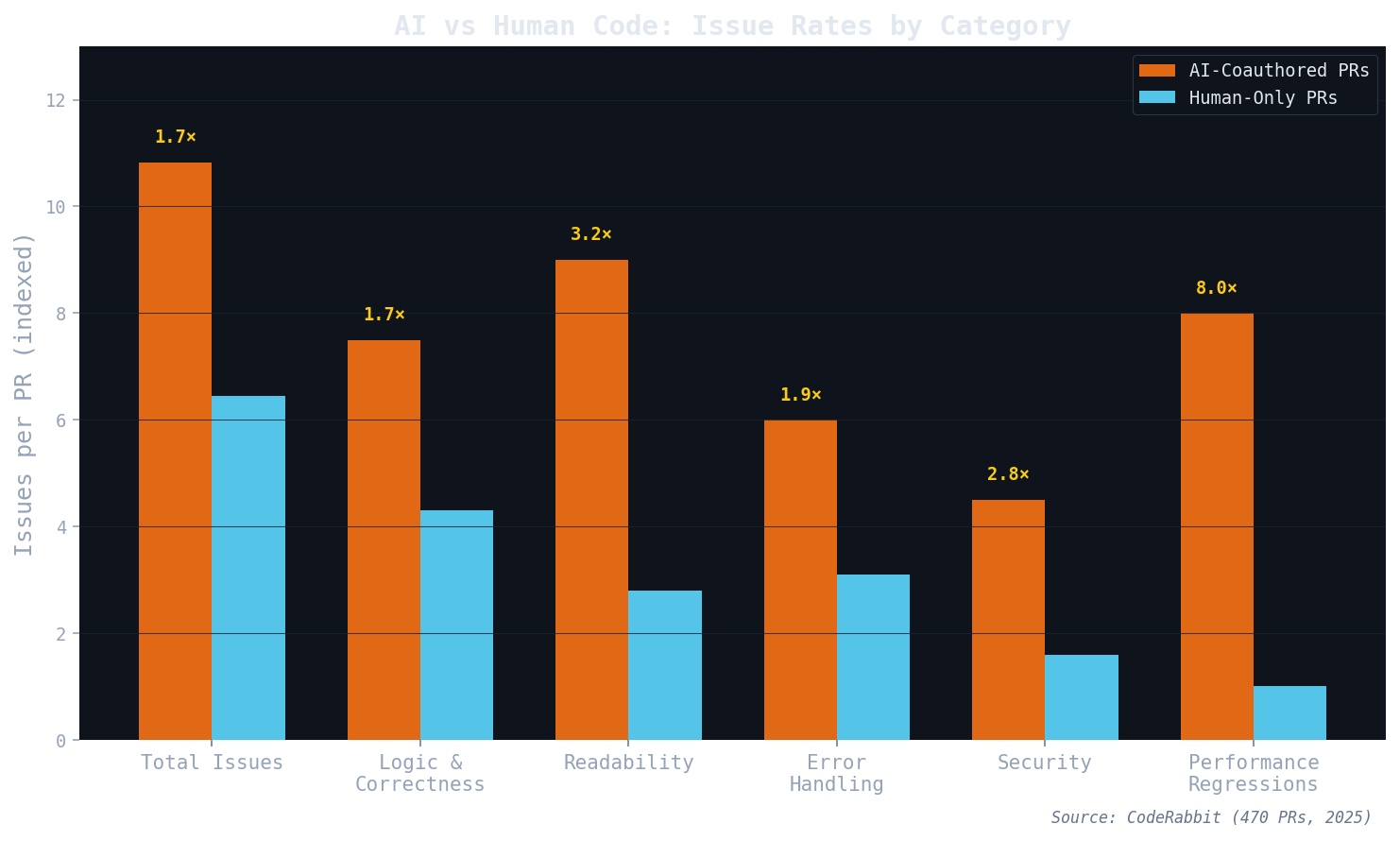

CodeRabbit’s analysis of 470 pull requests (320 AI-coauthored, 150 human-only) found AI PRs producing 10.83 issues per PR versus 6.45 for human-only. Security issues were up to 2.74 times more common. Performance regressions, particularly excessive I/O operations, were eight times more frequent. And the METR study delivered perhaps the most damning finding: sixteen experienced developers working on real tasks in large open-source repositories were actually 19 percent slower when using AI tools, despite believing they were 20 percent faster. That is a thirty-nine-point gap between perception and reality.
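To put the CodeRabbit per-PR figures on a common scale, here is a quick sketch of the arithmetic. Only the four numbers come from the report as quoted above; the variable names are illustrative.

```python
# Figures as quoted from the CodeRabbit report; variable names are illustrative.
ai_prs, human_prs = 320, 150
ai_issues_per_pr, human_issues_per_pr = 10.83, 6.45

# Relative issue rate of AI-coauthored PRs versus human-only PRs.
issue_ratio = ai_issues_per_pr / human_issues_per_pr

# Share of the sample that was AI-coauthored.
ai_share = ai_prs / (ai_prs + human_prs)

print(f"AI PRs carry {issue_ratio:.2f}x the issues per PR")
print(f"{ai_share:.0%} of the sampled PRs were AI-coauthored")
```

On these figures, AI-coauthored PRs carry roughly 1.7 times the issues per PR, and the sample is weighted about two to one toward AI-coauthored PRs.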

The Real Mechanism: Learning a Worldview Where AI Defaults Are Physics

Here’s where the analysis needs to go deeper than “juniors don’t learn.” The concern isn’t simply that new engineers lack skills. It’s that they’re learning a fundamentally different relationship with code — one where the AI’s suggestions aren’t treated as starting points to interrogate but as baseline reality. As Mat, a late-career principal engineer in research software, puts it: “Not ‘juniors don’t learn’ but ‘juniors learn a worldview in which the AI’s defaults are physics.’” That’s the actual mechanism of AI-mediated competence, and it’s what Stetskov’s essay gestures at but doesn’t fully develop.

This matters because plausible architecture and good architecture are not the same thing. An AI will generate a system design that compiles, passes tests, and looks reasonable. It will not tailor that architecture to the specific constraints, failure modes, and organisational context of your problem. If you accept the plausible output because you lack the experience to see why it’s not good, you don’t just miss the better solution — you fail to develop the judgment that would let you recognise the gap next time. Gartner calls this “experience compression”: the expectation that AI tools will help young developers acquire skills in months instead of years, which in practice creates “experience starvation” and the “erosion of critical and foundational skills.” Their surveys found 91 percent of CIOs are dedicating little or no time to identifying AI’s behavioural byproducts.

In research software engineering, the dynamic plays out slightly differently. Teams are perpetually understaffed — AI productivity is genuinely helpful, bringing capacity up to a baseline where more research can happen. But the same structural problem emerges: hyper-individualistic researchers and engineers lean on AI to learn patterns, and without organisational slack to create the mentoring spaces where someone explains why the pattern works, they absorb plausible defaults uncritically. The irony is that AI is most dangerous precisely where it’s most useful — in understaffed teams that can least afford to lose the mentoring bandwidth.

What’s Actually Worth Worrying About

None of this means AI coding tools are useless or that the sky is falling. The METR study’s slowdown finding is specific to experienced developers on large, complex repositories — exactly the context where deep codebase knowledge matters most. For greenfield projects, boilerplate generation, and exploratory prototyping, the productivity gains are real. Mat’s own experience reflects this: “We’re very much more productive at the core business we are involved in day to day, spinning up rapid solutions to novel problems.” The worry is specifically about long-lasting platforms and architectural decisions, where experience isn’t optional and plausible isn’t good enough.

The constructive path forward looks less like rejecting AI and more like deliberately rebuilding the slack that extractive management keeps cutting. Mat is “looking to sit down with junior engineers and build something out and critically reflect on the architecture proposed by AIs,” which mirrors how academics are tackling AI use by students in the classroom. Stetskov mandates that every pull request explain what changed, why it changed, and the change type, and that it include before-and-after screenshots, forcing the kind of reflective practice that AI tools otherwise let you skip. The CMU study’s authors note that the work of maintaining simple, healthy code still falls to humans, and suggest running SonarQube scans on every PR before merge.
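A pull request rule like Stetskov’s can be enforced mechanically as well as culturally. The following is a minimal sketch, not his team’s actual tooling: the section names, the markdown-heading convention, and the `missing_sections` helper are all assumptions made for illustration.

```python
import re

# Hypothetical section names, loosely based on the four items the essay lists.
REQUIRED_SECTIONS = ("What changed", "Why", "Change type", "Screenshots")


def missing_sections(description: str) -> list[str]:
    """Return the required sections that are absent or have an empty body."""
    missing = []
    for name in REQUIRED_SECTIONS:
        # Match a markdown heading such as "## Why", then lazily capture
        # everything up to the next heading or the end of the description.
        pattern = rf"^#+[ \t]*{re.escape(name)}[ \t]*$(.*?)(?=^#+[ \t]|\Z)"
        match = re.search(
            pattern, description, flags=re.MULTILINE | re.DOTALL | re.IGNORECASE
        )
        if match is None or not match.group(1).strip():
            missing.append(name)
    return missing


example = """## What changed
Renamed the retry helper and removed a duplicate code path.

## Why

## Change type
refactor
"""

# "Why" has a heading but no body; "Screenshots" is absent entirely.
print(missing_sections(example))
```

A check like this only confirms that the author wrote something under each heading. Whether the explanation is any good still depends on a reviewer with the time to read it, which is the slack the rest of this piece argues for.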

The optimistic framing is that the influx of non-engineers into software development creates opportunities for professionals to develop new, more inclusive practices, such as figuring out how vibe-coded contributions can coexist with rigorously engineered systems. That’s a genuinely exciting possibility, but it requires acknowledging that the threat isn’t the technology. It’s the management pattern that treats every new tool as an opportunity to remove the humans who understood why things worked. Defence manufacturing lost its knowledge because consolidation eliminated redundancy. Software engineering is losing its knowledge because extractive management eliminates slack. The tool changes; the disease is the same.

Sources

- The West Forgot How to Make Things. Now It’s Forgetting How to Code — Denis Stetskov, Tech Trenches

- Hacker News discussion (756 comments)

- AI Copilot Code Quality: 2025 Research — GitClear (211M changed lines)

- Coding on Copilot: 2023 Data — GitClear

- AI Is Still Making Code Worse: A New CMU Study Confirms — Rob Bowley on CMU’s difference-in-differences study

- State of AI vs Human Code Generation Report — CodeRabbit (470 PRs)

- AI coding tools make developers slower, study finds — The Register on METR study

- AI may cause you to forget some skills — The Register on Gartner Symposium

- Is AI making us dumber? — The Register

- AWS CEO says AI replacing junior staff is ‘dumbest idea’ — The Register